orange, interaction, addons

Predictive Modelling with Attribute Interactions

Noah Novšak

May 13, 2022

The Interactions widget is one of the newest additions to Orange. Previously available only in Orange 2, it has been rewritten and can now be found in the Prototypes add-on, so there is no longer any need to go through the trouble of compiling an older version.

But what does it do?

For each pair of attributes, it computes and displays their interaction: how much the two attributes tell us about the target variable together, compared with what each tells us on its own, measured with mutual information. Doing so provides insight into the data at hand and aids in the search for better visualizations and predictive models.
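To make the idea concrete, here is a minimal sketch in plain Python. It assumes the interaction of attributes A and B with target C is scored as I(A, B; C) - I(A; C) - I(B; C); the widget's exact formula and normalization may differ, and the helper names (`entropy`, `mutual_information`, `interaction`) and the toy data are made up for illustration.

```python
from collections import Counter
from math import log2


def entropy(values):
    """Shannon entropy (in bits) of a list of hashable values."""
    counts = Counter(values)
    total = len(values)
    return -sum((n / total) * log2(n / total) for n in counts.values())


def mutual_information(xs, ys):
    """I(X; Y) = H(X) + H(Y) - H(X, Y)."""
    return entropy(xs) + entropy(ys) - entropy(list(zip(xs, ys)))


def interaction(a, b, target):
    """Assumed score: I(A, B; C) - I(A; C) - I(B; C)."""
    joint = list(zip(a, b))  # treat the pair (a, b) as a single variable
    return (mutual_information(joint, target)
            - mutual_information(a, target)
            - mutual_information(b, target))


# Toy data (an assumption, not a real dataset): target is 1 when a equals b.
a = [0, 0, 1, 1]
b = [0, 1, 0, 1]
target = [int(x == y) for x, y in zip(a, b)]

print("I(a; target)      =", mutual_information(a, target))
print("I(b; target)      =", mutual_information(b, target))
print("interaction(a, b) =", interaction(a, b, target))
```

On this toy data neither attribute carries any information about the target by itself, while the pair determines it completely, so the whole bit of information shows up as interaction.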

As far as visualizations go, consider Scatter Plot. Connecting Interactions to its Features input lets the user select the projection manually, much like the Find Informative Projections button, but with a little more oomph: it enables sorting, filtering, and attribute selection, and offers a clear view of where the information is coming from, be it a single feature or a specific combination.

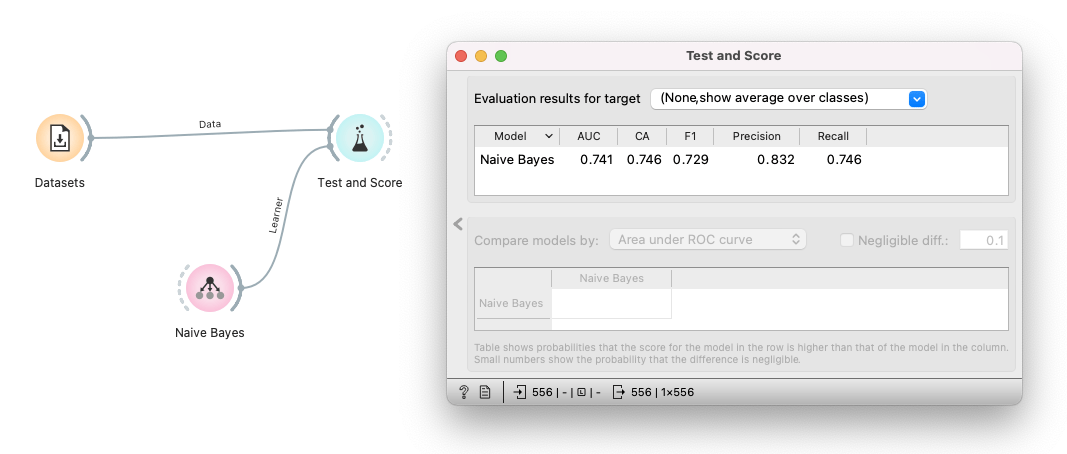

Another, possibly even more intriguing application is, as mentioned, improving the performance of predictive models. To illustrate, let's take a look at the MONK dataset, readily available within Orange in the Datasets widget. First, we can see how well a Naive Bayes Classifier (NBC) predicts the target variable, like so.

Taking the Area Under Curve (AUC) score of 0.741 as a baseline, we can see that our model already performs reasonably well. But we know we can do better than that! To see what makes the model tick, let's take a closer look at our data by adding the new Interactions widget to our workflow and examining the results.

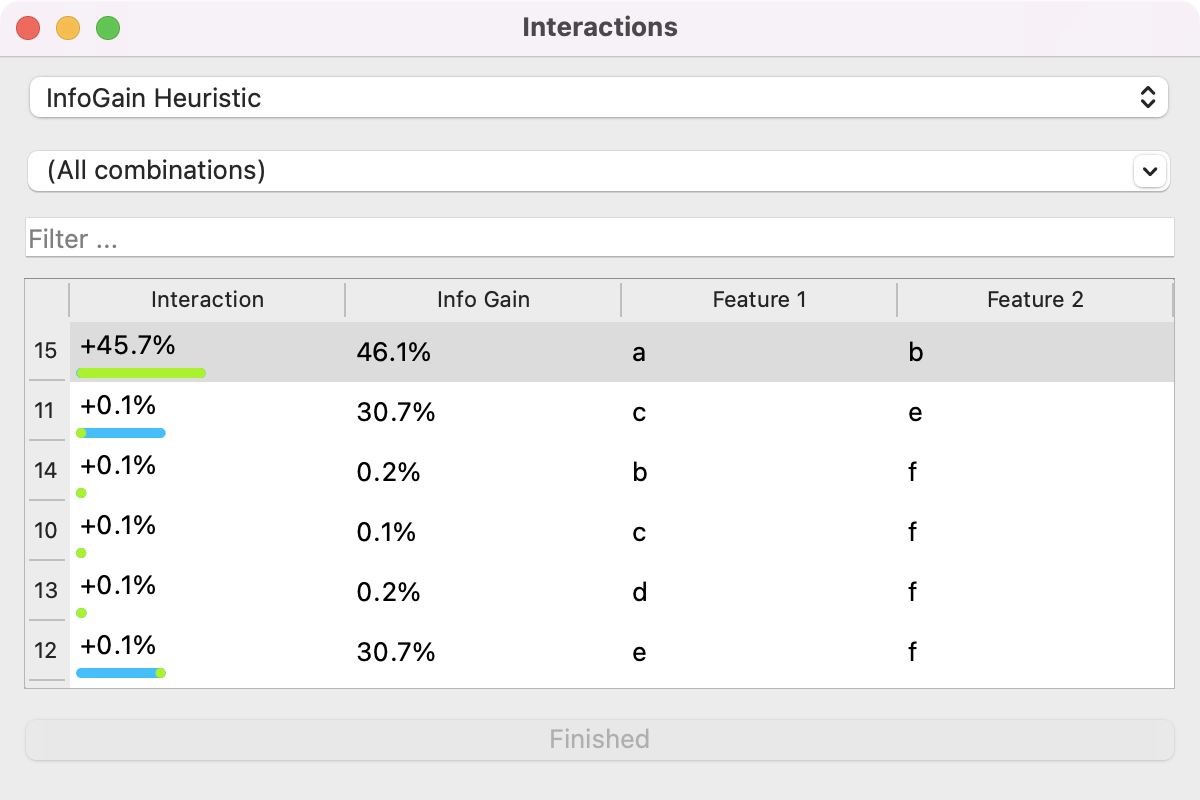

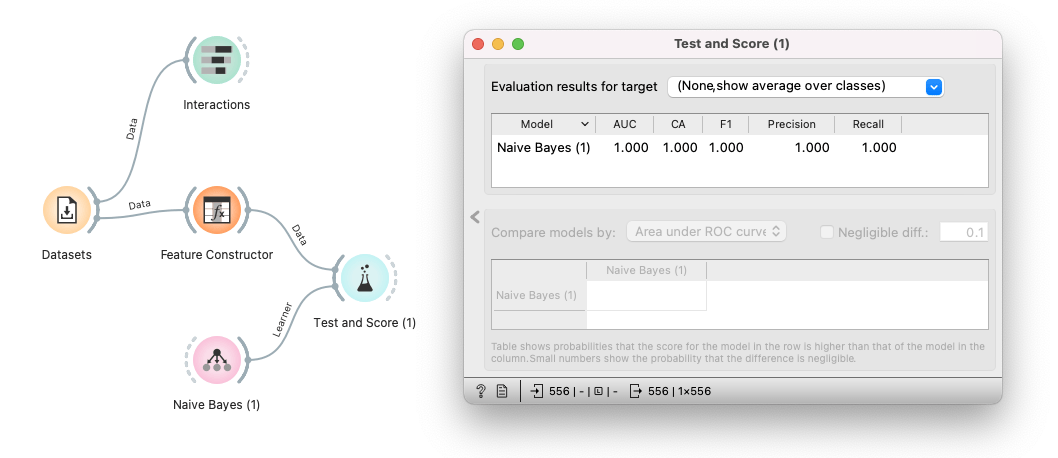

While neither a nor b carries much information independently, their combination tells a great deal about our target variable. With this in mind, we can now use the Feature Constructor widget to combine attributes a and b into a single feature and retrain our model. Applying all these steps then yields a workflow resembling this.

Lo and behold! It looks like the extra trouble has paid off. We have managed to improve our model's performance and have ended up with an AUC score of 1.0 by accounting for the dependence between variables, something NBC ignores by design, since it assumes that features are conditionally independent given the class.
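The effect of merging two attributes can be reproduced on a small scale. The sketch below is a hand-rolled categorical naive Bayes with Laplace smoothing, not Orange's implementation, and the toy data (target is 1 when a equals b) and the helper names `train_nb`, `class_scores`, `predict`, and `combine` are assumptions made for illustration. The `combine` helper only stands in for what one might build in Feature Constructor.

```python
from collections import Counter, defaultdict


def train_nb(rows, labels, alpha=1.0):
    """Fit a categorical naive Bayes model with Laplace smoothing."""
    class_counts = Counter(labels)
    value_counts = defaultdict(Counter)  # (feature index, class) -> value counts
    domains = defaultdict(set)           # feature index -> set of seen values
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            value_counts[(i, label)][value] += 1
            domains[i].add(value)
    return class_counts, value_counts, domains, alpha


def class_scores(model, row):
    """Unnormalized posterior P(class) * prod_i P(value_i | class)."""
    class_counts, value_counts, domains, alpha = model
    total = sum(class_counts.values())
    scores = {}
    for label, n in class_counts.items():
        score = n / total
        for i, value in enumerate(row):
            seen = value_counts[(i, label)][value]
            score *= (seen + alpha) / (n + alpha * len(domains[i]))
        scores[label] = score
    return scores


def predict(model, row):
    """Return the class with the highest score."""
    scores = class_scores(model, row)
    return max(scores, key=scores.get)


def combine(row):
    """Hypothetical stand-in for Feature Constructor: merge a and b."""
    return (f"{row[0]}_{row[1]}",)


# Toy data (an assumption): the target is 1 exactly when a equals b.
rows = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [int(a == b) for a, b in rows]
combined_rows = [combine(row) for row in rows]

separate_model = train_nb(rows, labels)
combined_model = train_nb(combined_rows, labels)

for row, label in zip(rows, labels):
    print(row, "true:", label,
          "separate scores:", class_scores(separate_model, row),
          "combined prediction:", predict(combined_model, combine(row)))
```

With a and b kept separate, both classes receive identical scores for every row, so the model can do no better than guessing. Once the two are merged into one feature, every row is classified correctly.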

I hope this short demonstration sheds some light on the possibilities interaction analysis provides, and I urge you to try it out on some real-world data.